Research Projects

My research spans the areas of design theory, human-computer interaction, and information visualization. I am interested in the creative design process, specifically in supporting early-stage design with a “computer-as-partner” framework, i.e., treating the computer as a collaborator rather than as a medium or tool. To achieve this, it is important to first understand how designers think through their own ideas, and how they collaborate. The projects below are part of my doctoral and post-doctoral research.

Analyzing Design Processes using Visual Analytics

Design protocol studies are often used to understand how designers think, communicate, and collaborate by studying audio or video records and transcripts of design sessions. Researchers sometimes augment these records with alternate representations of design activity captured through sketches, server logs, or even activity trackers. VizScribe is a web-based visual analytics framework that helps make sense of these multiple datasets: the researcher can explore the data through multiple coordinated visualizations and code the transcript with their observations. It provides timeline views of transcript, sketching, and activity tracker data, aligned with a video timeline of the design session. To customize or extend VizScribe to suit your temporal data, you can download the source code and refer to the Wiki to edit the visualizations. Please see the video below for a detailed demonstration.
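To give a flavor of what "coordinated" timeline views involve, here is a minimal Python sketch of the underlying idea: given a video playhead time, look up the most recent entry in each time-stamped data stream. This is not VizScribe's actual code; the function names, stream names, and sample data are all hypothetical, and each stream is assumed to be sorted by start time in seconds.

```python
from bisect import bisect_right


def latest_at(stream, t):
    """Return the most recent (start, payload) entry with start <= t, or None.

    Assumes `stream` is a list of (start_seconds, payload) sorted by start time.
    """
    starts = [start for start, _ in stream]
    i = bisect_right(starts, t)
    return stream[i - 1] if i else None


def coordinated_view(streams, t):
    """Look up what every stream shows at video time t (seconds)."""
    return {name: latest_at(stream, t) for name, stream in streams.items()}


# Hypothetical sample data for one design session.
streams = {
    "transcript": [(0.0, "Let's list the constraints."), (12.5, "What about a hinge?")],
    "sketch": [(8.0, "sketch_01"), (30.0, "sketch_02")],
    "activity": [(0.0, "seated"), (20.0, "standing")],
}

view = coordinated_view(streams, 15.0)
```

Moving the playhead to a different time and calling `coordinated_view` again is the simplest form of keeping several views in step with the video.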

Extending VizScribe's concept to focus on text data, my current project explores the application of visual analytics (VA) to grounded theory. Grounded theory is a research methodology that involves inductively analyzing qualitative, text-based data to generate theoretical constructs from within the data. Drawing parallels between this method and the inductive, visual sensemaking loop of moving from data to insights that motivates VA, I developed a prototype that extracts text metadata using natural language processing (NLP) techniques, and uses VA to help the analyst apply grounded theory to make sense of the data. For details, please see the video below.

RELEVANT PUBLICATIONS

Integrating visual analytics support for grounded theory practice in qualitative text analysis.

IEEE EuroVis 2017 (in review)

VizScribe: A visual analytics approach to understand designer behavior

International Journal of Human-Computer Studies, 100, pp. 66–80, 2017.

PDF | VIDEO | GITHUB | WIKI

Understanding brainstorming through text visualization

ASME IDETC/CIE Conference, Portland, Oregon, 2013.

PDF

Digital Support for Sketching in Design Ideation

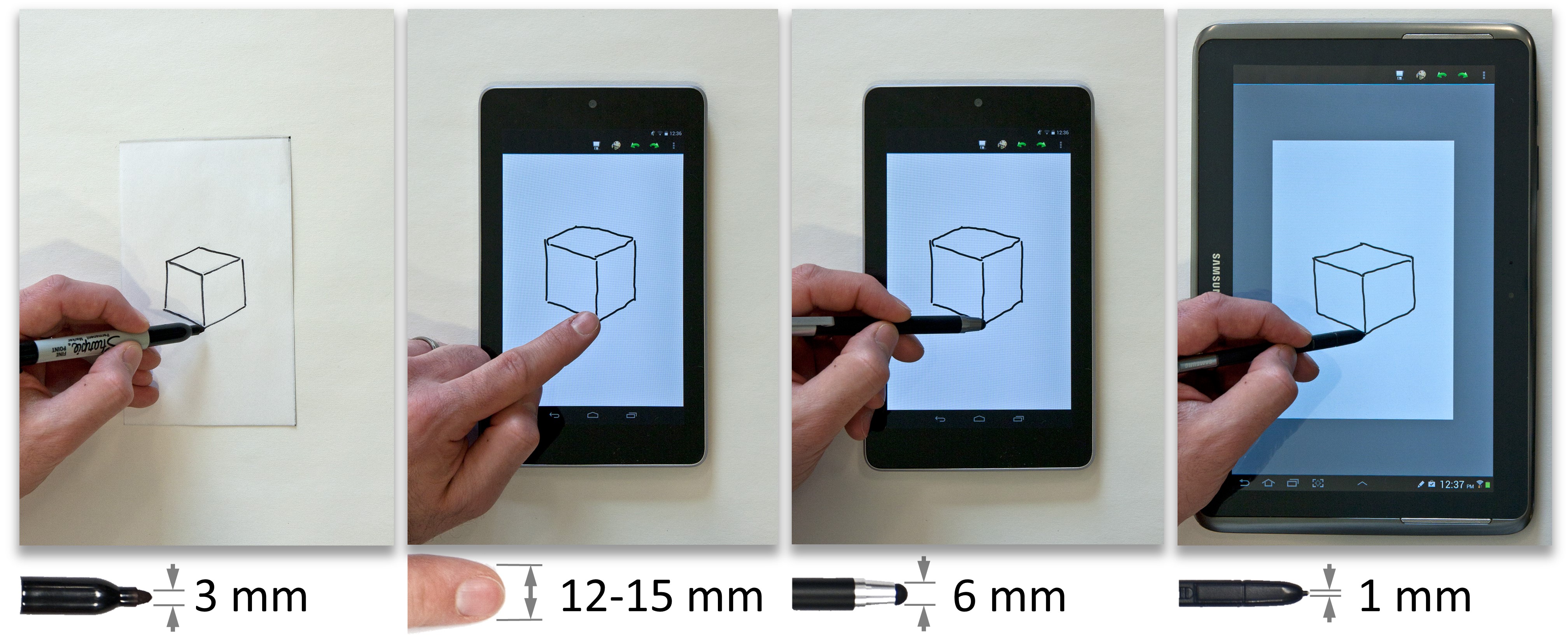

Digital support for sketching with hand-held computing devices and digital styli usually focuses on emulating pen and paper. However, to best support early-stage design, we need to augment the versatility of pen and paper with the computing power of digital support tools. Our comparative sketching studies between pen-and-paper and stylus-tablet media showed a preference for the sharp-tipped "active pen" (on the right of the image below) for tracing and sketching activities.

Team contribution during design ideation has been shown to often increase the originality of an existing idea. I was part of the team that developed skWiki, a web application framework for collaborative sketching. skWiki aids design exploration through branching and merging operations between designers, so they can create alternate versions of an idea, or combine two or more ideas. These versions connect to earlier versions instead of replacing them, and skWiki’s path viewer shows all file states connected by operations. For details, see the video below.

A longitudinal study with design students showed that this path viewer supported cognitive lateral and vertical transformations that help create alternatives and add details, which are vital to creative design. The path viewer also made these cognitive transformations visible to the design researcher, thus supporting them in studying design collaboration.
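The branching and merging idea can be illustrated with a small version graph in which every new version records its parents rather than overwriting them. The Python sketch below is illustrative only and is not skWiki's implementation; the class names, method names, and designer names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Version:
    vid: int
    author: str
    op: str              # "create", "branch", or "merge"
    parents: tuple = ()  # ids of the versions this one was derived from


class PathGraph:
    """Stores every version; nothing is ever replaced."""

    def __init__(self):
        self.versions = {}

    def _add(self, author, op, parents=()):
        for p in parents:
            if p not in self.versions:
                raise KeyError(f"unknown version {p}")
        vid = len(self.versions) + 1
        self.versions[vid] = Version(vid, author, op, tuple(parents))
        return vid

    def create(self, author):
        return self._add(author, "create")

    def branch(self, author, parent):
        # An alternate version of an existing idea.
        return self._add(author, "branch", (parent,))

    def merge(self, author, *parents):
        # A combination of two or more ideas.
        if len(parents) < 2:
            raise ValueError("a merge needs at least two parents")
        return self._add(author, "merge", parents)

    def ancestors(self, vid):
        """All earlier versions reachable from vid."""
        seen, stack = set(), list(self.versions[vid].parents)
        while stack:
            v = stack.pop()
            if v not in seen:
                seen.add(v)
                stack.extend(self.versions[v].parents)
        return seen


graph = PathGraph()
root = graph.create("designer_a")
alt_1 = graph.branch("designer_b", root)
alt_2 = graph.branch("designer_c", root)
combined = graph.merge("designer_a", alt_1, alt_2)
```

Here `graph.ancestors(combined)` returns the ids of the root and both alternates, which is the kind of lineage a path view can draw as states connected by operations.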

RELEVANT PUBLICATIONS

Collaborative sketching with skWiki: a case study

ASME IDETC/CIE Conference, Buffalo, NY, 2014.

PDF

Tracing and sketching performance using blunt-tipped styli on direct-touch tablets

Sriram Karthik Badam,

ACM AVI Conference, pp.193-200, Como, Italy, 2014.

PDF

skWiki: A multimedia sketching system for collaborative creativity

Zhenpeng Zhao, Sriram Karthik Badam,

ACM CHI Conference, pp.1235-1244, Toronto, Canada, 2014.

PDF | VIDEO | GITHUB

Design Projects

Some design projects I worked on as part of my graduate courses included proofs-of-concept, product redesign, and robotics, all of which were great fun.

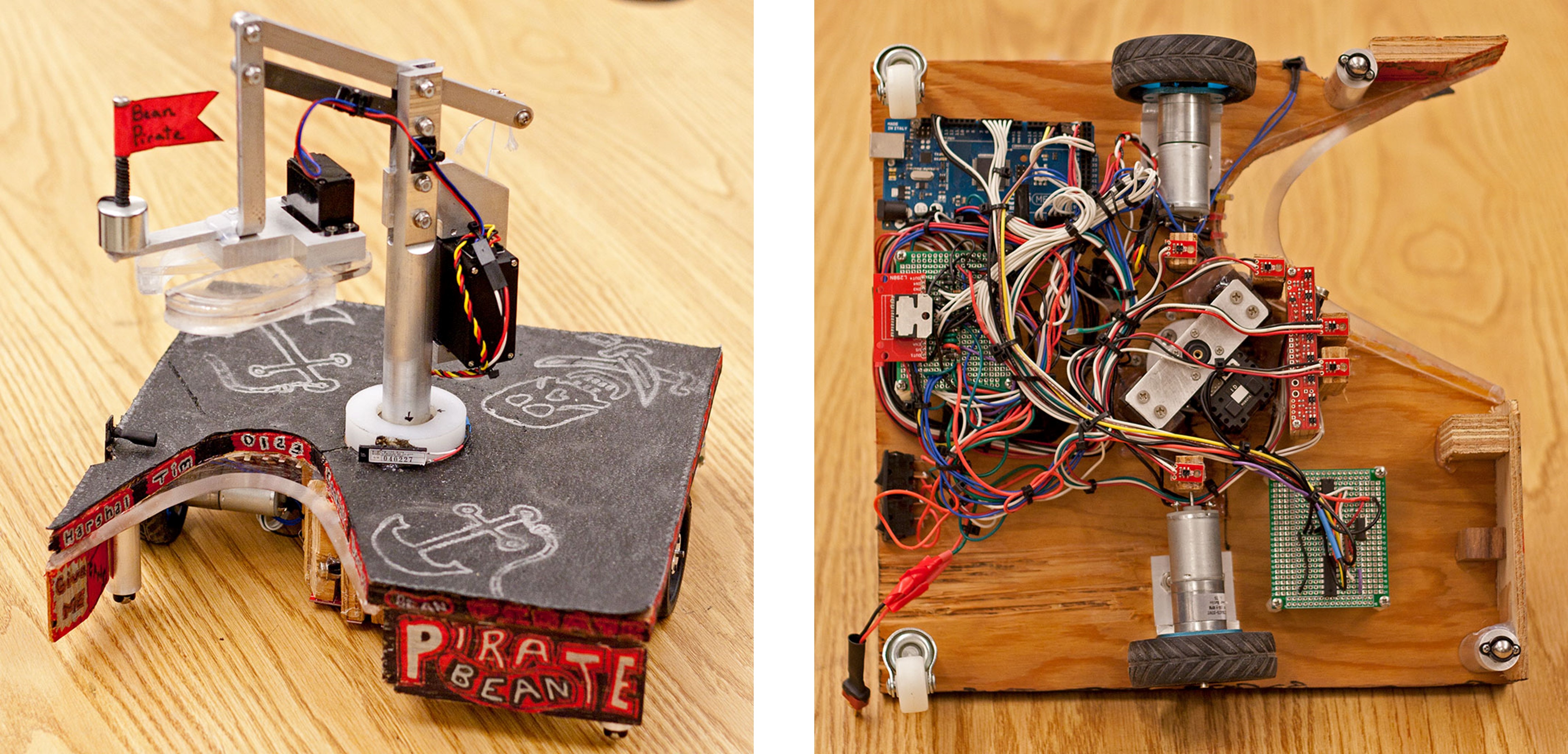

Autonomous Can-Collecting Robot

This project was part of a graduate course on mechatronics. The task assigned was to develop an autonomous robot to locate five "barrels of toxic chemicals", scaled down and represented in this case by five half-pound cans of black beans. The robot was to locate these cans, pick them up, and dispose of them at a specific disposal site. The robot was also to identify a can that was at a lower temperature, and dispose of it last. The task was simulated in a 7 x 7 ft field, and the robot needed to have a footprint no larger than 12 x 12 inches. The field had paths to the "toxic waste area" and to the disposal area marked with black tape.

We used a crane-type model with a gripper at the end of the crane arm to pick up the cans. The boom assembly was allowed to rotate about a vertical axis by an aluminum spindle mounted on a thrust bearing. Servo motors were used for the boom rotation, the winch, and the gripper. We used line sensors to follow the marked path, and a photoresistor to detect the disposal site. The cans were detected using a laser "trip wire" on the front of the robot, while an infrared temperature sensor was used to detect the cold can. We used an Arduino Mega microcontroller to program the robot. Overall, the robot worked well, except for a faulty line of code that prevented the winch from lowering the cans into the disposal area. You can see the robot in action, and hear our wails of anguish, in the video below.

For a detailed report and the source code, click on the links below.

REPORT | SOURCE CODE (ZIP FILE)

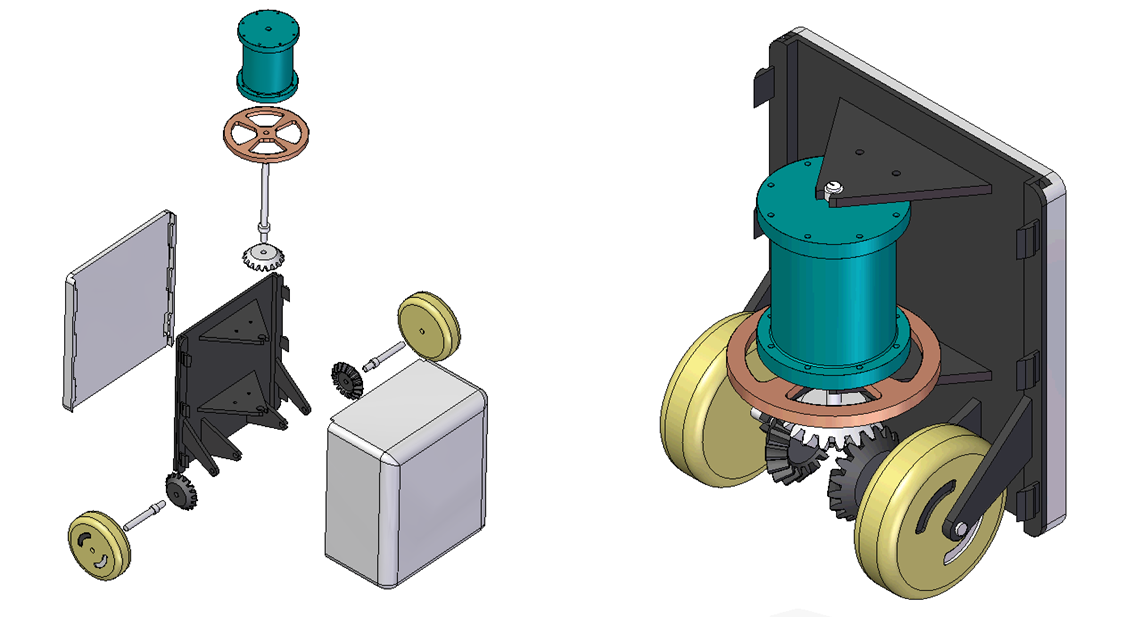

Power-Generating Door

This project was part of a graduate product design course where we were given free rein to design an original product and create a digital or physical mock-up. Our design was a modular power-generation device that can be mounted to the bottom of a door. We designed a modular unit that could be bolted to any door, with a simple height adjustment to ensure wheel-floor contact. The plastic cases were designed keeping in mind injection molding considerations. Finally, an overrunning clutch design on the wheels allowed the flywheel to rotate in the same direction regardless of the direction of movement of the door (opening/closing).

For more details, see the attached presentation/report.

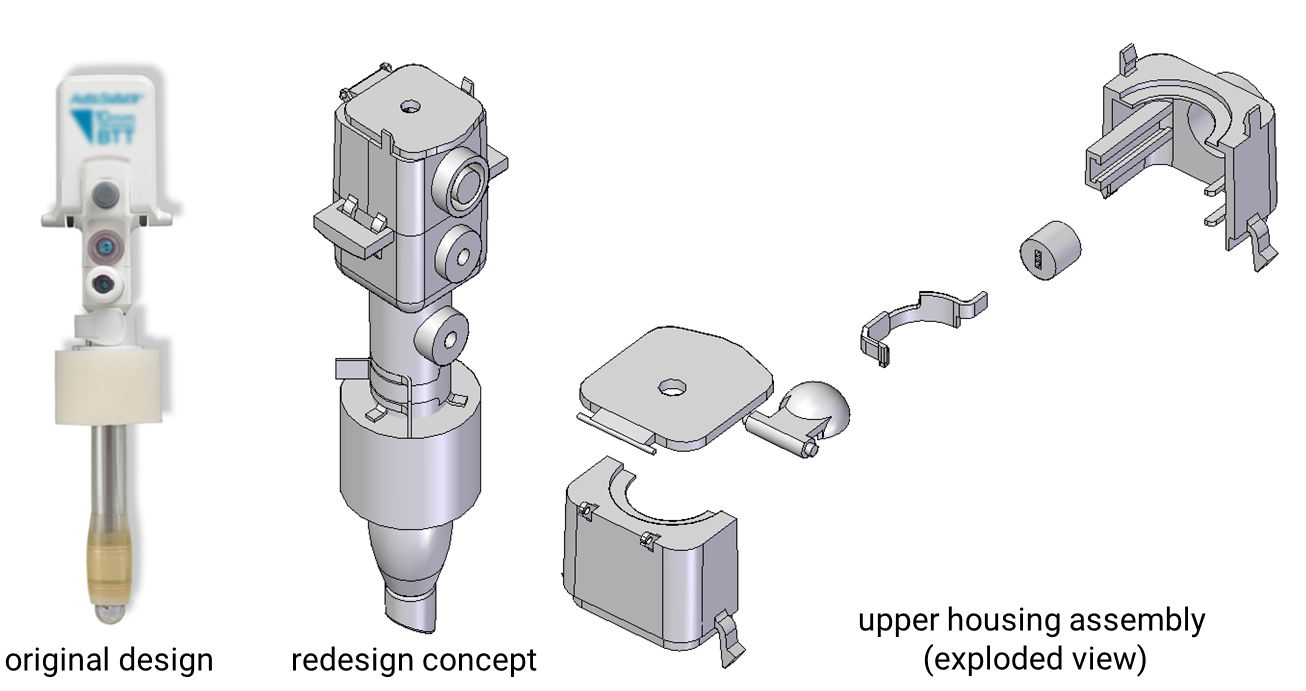

Insufflator Redesign

This project was part of a graduate design for manufacturability course, where the goal was to analyze an existing product and redesign it to improve manufacturability. Our assigned product was an insufflator: a device used in laparoscopic surgery to inflate the body cavity and allow the insertion of tools. More specifically, the goal was to simplify the assembly process by using fewer, easy-to-assemble parts.

We combined parts with no relative motion and used flexible adapters to replace multiple instrument adapters, bringing the part count down from 33 to 20. A 3D-printed prototype with a cutaway was created to illustrate the assembled components. This redesign was conceptual only, and a result of necessary oversimplification of the product requirements. For details, see the attached presentation and report.